Learning in the Age of AI: The WashU Student Perspective

On Friday, February 6, the CTL had the pleasure of hosting four incredible WashU students (including two of our very own Peer Coaches from The Learning Center!) for a conversation about what it is like to be a student learning in the age of AI. These students included Natalia, a junior majoring in finance and economics (who joined us from her study abroad in Madrid!); Levi, a first-year student majoring in philosophy; Bella, a senior who is double-majoring in Latin American and global studies with a focus on public health and computer science; and Erica, a student in the post-baccalaureate premedical program with a background in technology and design. The students offered viewpoints that were nuanced, enlightening, and inspiring, including perspectives about how AI is actually being used, its impact on critical thinking, and the ethical dilemmas it creates for both students and faculty.

Diverse Approaches to AI Use

It was clear from our conversation that student engagement with AI is far from uniform. Natalia mentioned using AI regularly for “brain dumps,” in which she describes her ideas and needs and the AI helps her organize her tasks. She mentioned it was particularly useful in planning travel itineraries while studying abroad, using it recently to plan a trip to Rome to view specific attractions at ideal times and on a budget. In contrast, Levi avoids AI entirely. He mentioned that he feels he would “be cheating [him]self” even by using it to write an email and that it does more harm than good overall.

Bella mentioned that while she does use AI for her research (e.g., data sequencing), she is “very careful about the kinds of AI that [she uses].” She prioritizes use of more specialized “smaller AI models” (e.g., those specific to the healthcare industry) and intentionally avoids general tools like ChatGPT due to ethical and environmental concerns. Erica primarily uses large language models for grammar assistance, noting that they are very helpful in this respect, but that she has encountered several instances where an AI model has provided inaccurate information when asked questions. For this reason, Erica emphasized that understanding a model’s purpose and function is essential; for example, she said, large language models should not be used as a substitute for a search engine, as that is not their function or purpose. Additionally, Erica agreed with Bella that choosing a more specific, task-based model is ideal.

Learning vs. “Outsourcing Thinking”

A central theme of the discussion was how AI affects the learning process. For the most part, students felt that whether AI helped or hindered learning was situational. Bella noted its benefits in finding issues with code – “finding things that are difficult for the naked eye to detect after hours of staring at a computer screen.” Erica described using chatbots for rubberducking, a technique originally described by Andrew Hunt and David Thomas in The Pragmatic Programmer (1999) and summarized by Wikipedia as a debugging technique “wherein a programmer explains their code, step by step, in natural language—either aloud or in writing—to reveal mistakes and misunderstandings.” Erica mentioned that this helped her check her own knowledge, somewhat ironically, by detecting mistakes or inaccuracies that the chatbot would produce.

While some applications of AI were mentioned as potentially useful in learning, all of the students expressed concern over the many ways it could detract from learning. Levi mentioned that his biggest fear is an “inability to think.” He expressed concern that AI is “the beginning to an end of thinking” and risks inhibiting the high-level communication necessary for effective and compassionate societal function. All students mentioned the risks of hallucination, or AI producing inaccurate or misleading information, and the need for users to have skills that enable them to critically evaluate AI output. Natalia mentioned her concerns for younger students, for example her cousins in elementary school, who must learn to navigate these issues “during such a pivotal time in their learning.” Erica mentioned that while AI makes it more likely to encounter misleading or incorrect information, these issues are not necessarily specific to AI tools: “the same thing could be said about […] Wikipedia. Did a human write it? Is it a good source?” She emphasized how important it is to build skills in finding and evaluating primary sources generally and that these skills should not be restricted to conversations about AI, or digital, literacies.

The Return of the Tech-Free Classroom

Recently, StudLife published an article discussing the rise in digital device bans in classrooms within the Arts & Sciences. Another focused on the value of hand-written notes. Thus, I asked the students their opinions about this growing trend. Bella noted that five of her six current classes are tech-free, which “would have blown [her] mind” when she first came to WashU, in part due to many classrooms being supplemented with technologies in response to the COVID pandemic. However, she expressed enjoying the shift to tech-free spaces. She has noticed “increased participation and engagement” and “really great contributions” from her classmates “without the distraction of technology.” Natalia mentioned the benefits of reduced distraction, noting that when her “computer [is] up, it’s not just my tab with my notes, it’s my tab with my notes and then my calendar and then my email and then I get a text notification and then I’m texting all the time and it’s just a lot.” She said that in “the classes where I can have like my computer down and I have like a pen and paper, I am more engaged. Like I’m more often going to ask more questions because I’m paying attention and there’s not really anything else that’s like taking up my time.” Levi also touted the benefits of tech-free classrooms, noting that the elimination of distraction not only from personal devices but from being able to view classmates’ use of personal devices has resulted in increased ability to actively listen during class. He emphasized the value of tech-free classrooms especially when students are developing foundational reading and writing skills.

Erica mentioned that while she agreed with the benefits of tech-free classrooms, she has historically been unable to take notes at an effective pace. When accommodations for a notetaker were granted by the accessibility office, it was recommended that she use an AI notetaker. Both Bella and Erica noted their concerns with the challenges that tech-free classrooms can pose for accommodations in which technology has been transformative.

Additionally, Bella noted that tech-free classrooms can potentially pose a financial burden to students. She mentioned her experience with a heavy reading class that required all notes and assigned readings to be printed and brought to class. Printing costs for this class exceeded the annual student quota, resulting in additional out-of-pocket costs. She mentioned that while she felt that having a physical copy of her notes and the readings was helpful and enriched her experience in the course, the financial burden was notable and she was concerned about the environmental impact of increased printing.

Regarding Instructor Use of AI

A question from the audience via Q&A asked about the students’ opinions of instructors using AI. All mentioned that they felt that instructors should be held to the same standards as students. One particular concern was a rise in recommendation letters being flagged as “AI generated” and returned to students for confirmation. Students mentioned the impact this practice had on student-professor trust and relationships, as well as concerns for how this could impact students’ future careers. Bella mentioned that “hopefully we could build a community at a place like WashU in which that trusting relationship between professors and students is there and that […] if you’re using [AI,] we also want to learn from you … as to what’s beneficial and valuable.” Natalia discussed concerns with using AI to grade student work, mentioning that this practice led to her feeling discouraged, especially when the feedback was overly general or not relevant, contextual, or personalized, which made it unhelpful. Levi mentioned that using AI “as a shortcut” as a professor would be breaking a promise to students: “being a professor is tough but they are making a promise to the students to give them the best education possible and I don’t think AI really plays a role in that.”

Erica mentioned the importance of clarifying the utility of AI when applicable to specific fields. She mentioned the importance of avoiding generalizations regarding the use of AI as inappropriate when it is a necessary tool for many fields. She also mentioned that several fields work to mitigate the negative impacts of generative AI, for example addressing the issue of intellectual property. She encouraged faculty to engage with AI when it makes sense, especially to their field.

AI Temptations

Another audience Q&A question asked “have you yourself or anyone you’ve known ever felt tempted to use AI in a way that was not necessarily allowed in class? If so, what about the assignment made AI tempting and how was it resolved?” All students mentioned being tempted, and that these temptations arose from a combination of time management, lack of confidence, not understanding an instructor’s explanation, and/or being overwhelmed with responsibilities.

Bella mentioned that AI was most tempting when she was unsure of the value or utility of an assignment: “I feel like some of those assignments, I’m like ‘I don’t know why I’m being asked to do this.’ … I’m most frustrated by having to do … assignment[s] that feel tedious or like the instructor isn’t necessarily asking me something … that I could contribute. It’s more like ‘make sure you answer these 10 questions in this order.’ And … Google could do the same thing. Like sometimes it feels like the assignment is just to get the points in for the grade. So, oftentimes, I wish that I wasn’t doing those smaller points assignments and that I was actually just asked to maybe contribute something a little bit more valuable to those classes because that’s something that I feel like a Chat GPT can do so easily and it takes it, you know, 30 seconds and me, you know, 20 minutes that I could spend at work or doing other things. And so I think maybe those are the types of assignments where it gets frustrating because you’re like, well, if an AI model could do it, then why are you asking me to do it?” Bella also mentioned the significant struggle with large quantities of reading assignments and major projects or exams all being scheduled at the same time combined with faculty being inflexible with moving deadlines.

Natalia mentioned temptation arising from seeing other students use generative AI and the potential for this to lead to better grades and less time spent on assignments, freeing up time for extracurriculars. Levi added that students often “over-involve themselves” by joining clubs, affinity groups, and organizations which can take time away from learning. He mentioned that the onus is on students to ask themselves, “am I here to be a part of as many clubs as possible or am I here for an education?” He mentioned that students need to prioritize learning in their time management.

Considerations for Faculty

To wrap up the discussion, I asked students, “what do you wish your instructors understood about navigating college right now?”

Bella reiterated that she hopes faculty consider accessibility and cost when considering how they approach AI in the classroom, in addition to widespread acknowledgement of the ethics and morality of the tool (mentioning “the mass quantities of water it uses,” “horrific human rights violations,” and concerns over “who it takes forward and who it leaves behind”). However, she encourages faculty to “not [get] discourage[d] … with how fast everything is moving… because we have felt this way about many things and I think there will be good that comes out of it. Maybe I’m just an optimist but I think there is good that’s already coming out of it and there will continue to be as long as we find ways to sort of legislate… and build boundaries… surrounding the technology growth.” To this point, she suggested reading Dr. Amy Orben’s article The Sisyphean Cycle of Technology Panics in Perspectives on Psychological Science (2020).

Levi encouraged professors to have high expectations for students in terms of reading endurance, reading comprehension, and writing, but to also be patient because “it’s not going to be an overnight thing.” He also emphasized the importance of not “denying AI” (mentioning that this would be doomed to fail) but instead leveraging it to one’s advantage and avoiding using it to outsource thinking. However, he encouraged faculty to prioritize the development of foundational skills that will enable students to critically evaluate information, or “engage with the world at a high level.”

Natalia shared the experience of an instructor who, despite the class being tech-free, taught students how to effectively ask questions of AI. “He kind of taught us how to ask questions and like recognize when something’s not right and like how to figure out if it’s just something that it’s pulling out of like its algorithm or you know if it’s actually something that could be true.” She mentioned the value of this activity being that it demonstrated some of the capabilities and limitations of AI in terms of its utility in the course.

Erica discussed her concerns about the potential for AI to increase output efficiency and the resulting increases in productivity expectations. She mentioned the risk of decreased quality as a result of increased output, asking faculty to be mindful of such risks. She suggested building in structures that provide students with flexibility (e.g., flexible deadlines) and making sure students feel supported in their efforts. Erica also encouraged faculty to be mindful of designing for accessibility, especially because many students are not comfortable advocating for their needs in this realm.

Recommendation: Communication

To close the conversation, students discussed how to move forward in the context of advice for students and faculty. Levi emphasized the importance of “thinking for yourself” and not offloading critical thinking or learning. Natalia mentioned the importance of learning about the technology in a way that enables informed navigation of a world in which AI is present. Erica mentioned the importance of student support and designing for accessibility. Bella capped the conversation with a call for increased communication in all forms: keeping the door open for discussions that promote empathy, understanding, and learning. All agreed, and ultimately the consensus was clear: the solution to the AI challenge is not just stricter policies, but more honest, empathetic communication. As we all grapple with this rapidly evolving technology, the focus must remain on maintaining educational integrity while preparing students for an AI-integrated world.

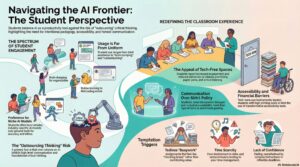

Summary Infographic

Navigating the AI Frontier: The Student Perspective. This infographic was generated using NotebookLM. The (deidentified) blog text was entered into a Word doc and uploaded to NotebookLM. The Studio Infographic feature (Beta) was selected to use this source in image generation. The image was then uploaded to Google Gemini to generate alt text.